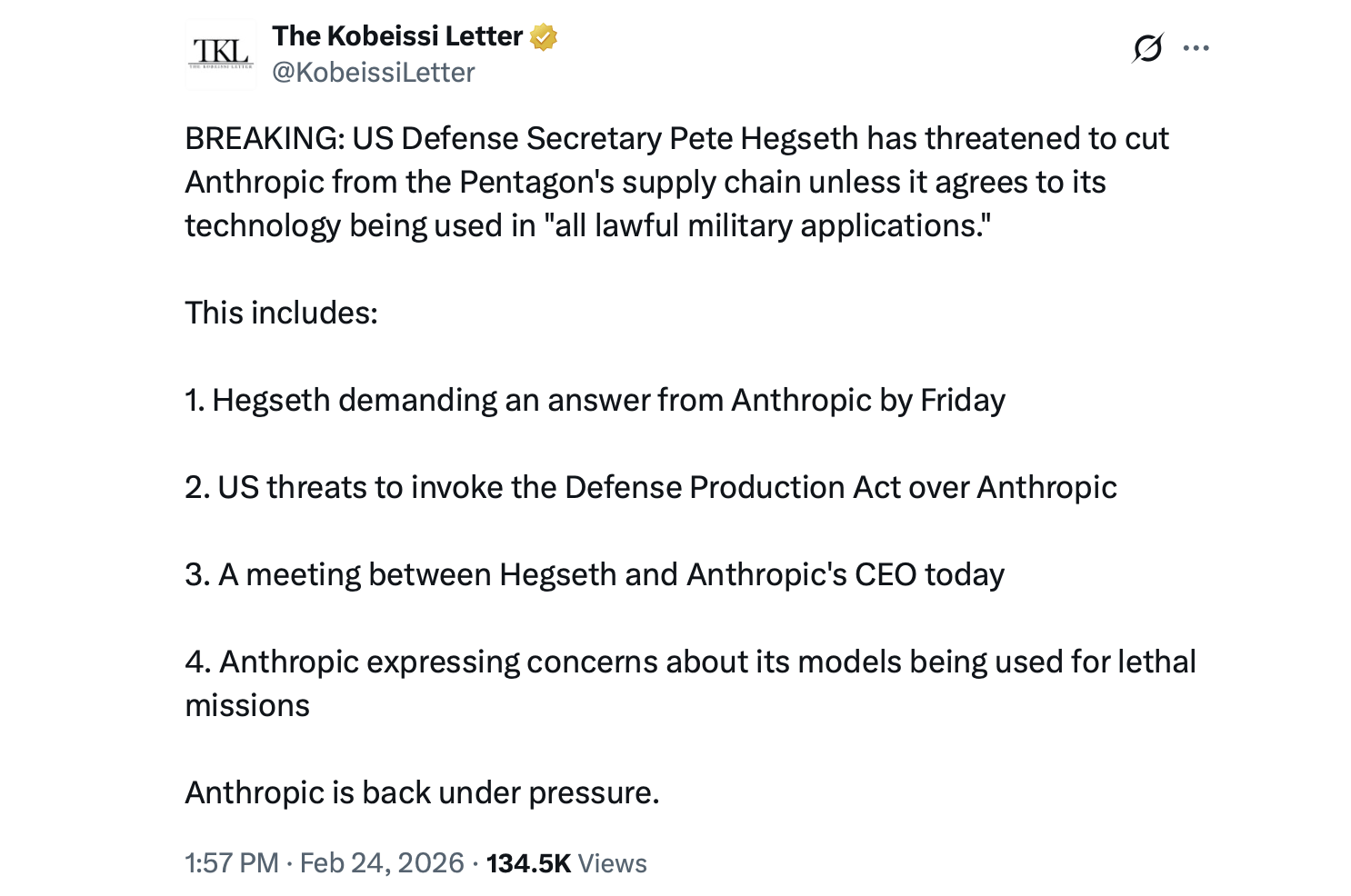

Defense Secretary Pete Hegseth decided to have a little “come to Jesus” meeting with Anthropic CEO Dario Amodei at the Pentagon this Tuesday. Why? Well, the Department of Defense is not exactly thrilled with the restrictions on the Claude AI system. If Claude doesn’t play by the Pentagon’s rules, there may be some hefty penalties. And I mean hefty.

Military AI Governance: A Reality Show You Didn’t Know You Needed

On February 24, Hegseth and his crew, Deputy Secretary Steve Feinberg and General Counsel Earl Matthews, sat down to hash out some of the “minor” disagreements about Claude, the fancy large language model that’s basically the Pentagon’s best-kept AI secret. It’s got a pilot contract with a $200 million price tag, and it’s the only AI that’s officially allowed to mingle with sensitive Pentagon systems. But that’s where the drama begins.

According to Axios reporting, Hegseth and his team took issue with some of the rules Anthropic has slapped onto Claude, especially when it comes to mass surveillance of Americans and fully autonomous weapons that might take a vacation from human oversight. Apparently, these restrictions are making it harder for the military to do, well, military things. And according to them, that’s not how democracy works.

Anthropic, of course, insists that these “restrictions” are really just a way to prevent chaos, like tracking random people or unleashing lethal systems without a human touch. Because, you know, maybe having a robot decide who gets to live and who gets to be vaporized is not exactly a great PR move.

“Anthropic’s never had a problem with using its models for legitimate military operations,” Fox News’ Jennifer Griffin reported on X. She added that Anthropic assured Hegseth they weren’t whining about the model’s use during the infamous Maduro raid. But hey, a little clarification never hurt anyone, right?

Claude, it turns out, isn’t just sitting around looking pretty. It’s been used for intelligence analysis, cyber defense, and planning. There was even a report that it helped with the operation that captured Venezuelan President Nicolás Maduro. But when Anthropic started questioning its own involvement in the operation, Pentagon officials got a little twitchy. Was that a complaint, or just a little corporate soul-searching?

And don’t forget that memo Hegseth sent out on January 9, where he urged AI companies to stop acting like protective parents when it comes to military contracts. This eventually led to the February 24 meeting, where the gloves were off, and the stakes were high.

Now, the Pentagon’s given Anthropic until February 27 to rework its terms, or else the Defense Production Act might be brought into play. That could mean the Pentagon gets a bit more aggressive, and not in the fun “let’s-join-a-pizza-party” way. They might even have to start looking at alternatives. Don’t worry, though: competitors like xAI, OpenAI, and Google are all hanging around, waiting to step in if things go south.

The whole situation also brings up the big question: Can private AI developers really be trusted to maintain their own ethics when their systems are now tangled up in national security? Because, you know, nobody really trusts the ethics of anyone with a massive tech budget and a fondness for shiny robots.

And the social media reactions? Oh, they’ve been priceless. One person on X joked that “warrantless surveillance is back.” Another, with a slightly darker sense of humor, said, “Skynet is here… the 2029 timeline is LIVE.” I mean, if we’re going down, let’s at least get some good memes out of it, right?

So, will Anthropic adjust its AI safeguards before the deadline? Or will we see the Pentagon look for a new AI BFF? Stay tuned, because this might get real interesting.

FAQ 🤖

- What was discussed at the Feb. 24 Pentagon meeting?

The meeting was all about whether Anthropic’s restrictions on Claude AI are getting in the way of military operations. Big surprise, right?

- Why is the Pentagon pressuring Anthropic?

Because the military wants to do its job, and it feels like Claude’s safeguards are slowing down its national security operations. Imagine trying to run a race with a sloth.

- What could happen if Anthropic doesn’t comply?

The Pentagon might flex its muscles and use the Defense Production Act to force compliance. Or, they could just declare Claude a risk to their supply chain. Ouch.

- Are there alternatives to Claude?

Oh yeah. There are plenty of other AI models like xAI, OpenAI, and Google waiting in the wings. It’s like the military’s AI dating app.

2026-02-25 04:27