Just what sort of GPU do you need to run local AI with Ollama? — The answer isn’t as expensive as you might think

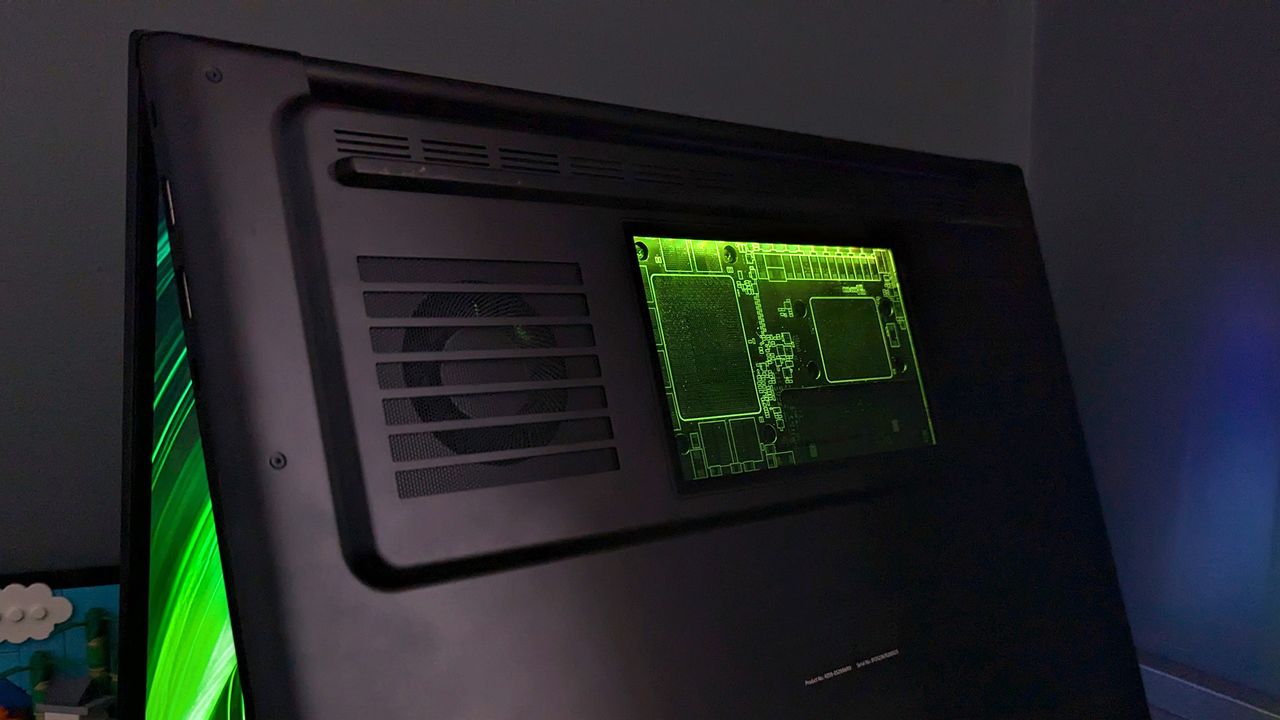

Ollama is one of the simplest and most popular ways to experiment with LLMs on your own PC. Unlike ChatGPT, though, it runs models locally, so you need reasonably powerful hardware, particularly because Ollama currently supports only dedicated GPUs. Even if you use LM Studio with an integrated GPU instead, you'll still need a decent machine to get good performance.